Does the world seem like it’s burning? When you scroll through your social media, do you feel like things are getting worse? Are there times when your feed is filled with anger, hate and despair? Have you felt the need to crusade on social media, stoking anger and urgency about a situation, or seen another person do it? If you have, there’s a chance that you or that person has been manipulated by AI.

A lot of internet sources that tell you something is bad look for someone to point a finger at. I’m not going to do that here. I don’t know what goes on behind closed doors at Google, YouTube, or Facebook. Considering human psychology and the way machine learning algorithms operate, it’s entirely possible that this happened by accident. What’s important is moving forward as a society and preventing further damage. Witch hunting and claiming to read the minds and intent of others is a waste of your time.

Now, let’s look at the basics of a machine learning algorithm. It’s essentially a regression algorithm for a mathematical model. This means that it repeatedly adjusts the model to find the solution that has the minimum error. We can see this with the most basic machine learning model for binary outcomes, the logistic function.

The result of the logistic function is between 0 (0%) and 1 (100%). Each arrow going into the h node is a weight which multiplies the input value. So, if we’re going to predict cardiac arrest, the machine learning algorithm could tune the weights for x0, x1, and x2 until we have the least error. So if we train it on:

x0 = age

x1 = gender (1 if female, 0 if male)

x2 = history of cardiac arrest (1 if true, 0 if not)

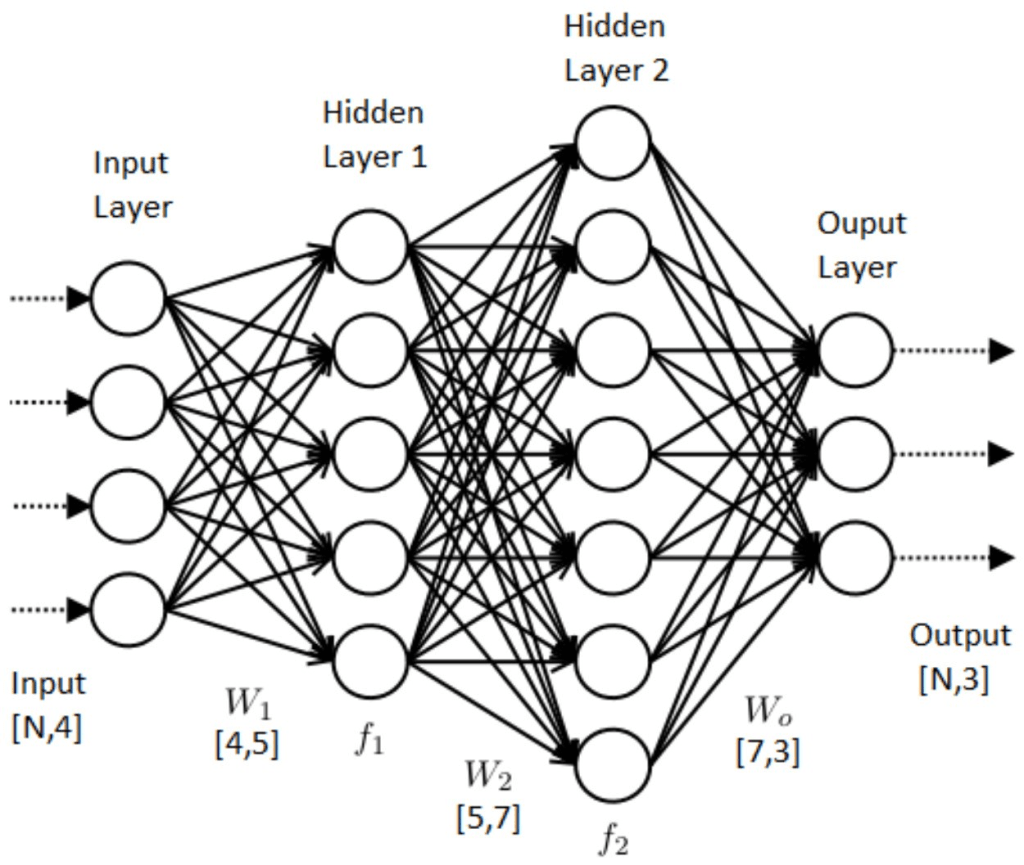
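To make the model above concrete, here is a minimal sketch in Python. The weights and the bias term are made up for illustration; they are assumptions, not values from a trained model, and the bias is an extra constant that the description above does not mention. The function names are hypothetical too.

```python
import math

def logistic(z: float) -> float:
    """Squash any real number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-z))

def predict_probability(weights, bias, inputs):
    """Multiply each input by its weight, add them up, apply the logistic function."""
    z = bias + sum(w * x for w, x in zip(weights, inputs))
    return logistic(z)

# Hypothetical weights for x0 (age), x1 (gender), x2 (history of cardiac arrest).
# Training would tune these numbers; here they are simply invented.
weights = [0.04, -0.3, 1.2]
bias = -4.0  # assumed constant offset, also invented

patient = [65, 0, 1]  # 65 years old, male, has a history of cardiac arrest
probability = predict_probability(weights, bias, patient)
print(f"Predicted probability of cardiac arrest: {probability:.2f}")
```

Training is nothing more than nudging the numbers in `weights` and `bias` until the predictions disagree with the recorded outcomes as little as possible.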

We can put those inputs into the trained model to get a probability of a cardiac arrest for a patient (granted, probably not an accurate one). You then decide on the cut-off percentage based on how many false positives and false negatives you can afford to have. A neural network is what we discussed above on steroids.

Each arrow is a weight tuned by the machine learning algorithm, and each node is a math function (the logistic function can be used, but there are others). I’m showing you this because I want you to understand that machine learning in general is just a mathematical model with weights that tries to make predictions. It doesn’t understand anything. For instance, if we train a neural network to diagnose a disease that is prevalent in only 1% of the population, it would achieve 99% accuracy very quickly by just saying no to every patient it sees. You basically have to copy and paste positive patients in the training data (not the testing data) until you get a 50/50 split to prevent the AI from doing this.

This is also the case for social media. Algorithms are merely there to increase your engagement with the site. It’s a one if you click on the ad, a zero if not. Time spent and clicks are what the algorithm is trying to optimise; it neither cares about nor understands the ramifications of its actions. Again, we can’t speculate on what sites like Google and Facebook are doing. I’m sure they’re using much more advanced algorithms than the neural network I just described. However, to my knowledge, at this point in time neural networks are still black boxes. We don’t know what’s going on under the hood when we ask a neural network to make a prediction or suggestion.
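The 1% disease example above is easy to check with a few lines of Python. This is a sketch on an invented data set of 1,000 patients, not real medical data, and the "model" is just a list of zeros standing in for a network that has learned to say no to everyone.

```python
# Hypothetical data set: 1,000 patients, 1% of whom have the disease.
labels = [1] * 10 + [0] * 990  # 1 = sick, 0 = healthy

# A "model" that says no to every patient it sees.
predictions = [0] * len(labels)

correct = sum(p == y for p, y in zip(predictions, labels))
accuracy = correct / len(labels)
sick_caught = sum(p == 1 and y == 1 for p, y in zip(predictions, labels))
print(f"accuracy: {accuracy:.0%}, sick patients caught: {sick_caught} of 10")

# Naive oversampling: copy the positive patients in the training data
# until the split is 50/50. The testing data is left untouched.
positives = [y for y in labels if y == 1]
negatives = [y for y in labels if y == 0]
copies = len(negatives) // len(positives)
balanced = negatives + positives * copies
positive_share = sum(balanced) / len(balanced)
print(f"share of positives after oversampling: {positive_share:.0%}")
```

The accuracy figure looks excellent while the model catches nobody, which is why the metric you optimise matters more than how clever the model is.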

So, back to you. When you’re using a website, the algorithm is designed to try and keep you engaged. It will serve you content that will entice you to keep watching, clicking and typing. We could take comfort that the algorithm only cares about these metrics. Sadly, due to human psychology, this is actually a curse. Our brains have a negativity bias [link]. We’re more prone to react to negative stimuli, and when we do react to a negative stimulus, our reaction is stronger. This biological flaw, combined with the algorithm’s cold approach to ruthless optimisation, forms a self-reinforcing feedback loop of negativity. The algorithm is rewarded more when it serves you something negative, as you type, click and watch more. Its weights are adjusted, and it feeds you more media of that category.
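The feedback loop described above can be sketched as a toy simulation. Everything here is an assumption made for illustration: the reaction rates are invented, the update rule (serve each category in proportion to the engagement it earned last round) is a deliberately crude stand-in, and no real site is claimed to work this way.

```python
def simulate_feed(rounds=20, react_negative=0.20, react_positive=0.10):
    """Track the share of negative content in a feed that chases engagement.

    Each round the feed is re-weighted in proportion to the engagement
    each category earned in the previous round.
    """
    share_negative = 0.5  # start with an even mix
    history = [share_negative]
    for _ in range(rounds):
        engagement_negative = share_negative * react_negative
        engagement_positive = (1 - share_negative) * react_positive
        total = engagement_negative + engagement_positive
        share_negative = engagement_negative / total
        history.append(share_negative)
    return history

history = simulate_feed()
for round_number in (0, 1, 2, 5, 10, 20):
    print(f"round {round_number:2d}: {history[round_number]:.1%} negative")
```

Nothing in the loop knows what "negative" means. The only difference between the two categories is that one gets a reaction twice as often, and that alone is enough for it to take over the feed.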

If you want to see this in action, click on a political video on YouTube, turn the sound off, turn autoplay on, and then go to bed. Go to work the next day and then see what YouTube is showing you when you get back. It will be weird. When I did it, the result was loads of cut clips of one political side beating up members of the other political side. What’s happening is that you’ve condensed a long time into a couple of days. You were not there for the ride, so your mental window didn’t shift with the videos. The algorithm thought that you watched each video for its full length, so it suggested another video that people who also watched that video went on to watch all the way through. It’s harrowing because you get to see the end result without the slow conditioning.

OK, so algorithms don’t really understand anything past the metrics, and our biology encourages the algorithm to serve us negative things. However, we’re smart, right? We can see through the lies. I’m pretty sure you can remember a time when you corrected a friend on social media who posted something you knew was false. Don’t kid yourself that you’re an exception. The reality is that you’re probably just as duped as they are. To demonstrate this, let’s run a classic banking scam. We can email thousands of people with the claim that we have a system that predicts stocks. To prove it, we also include a stock prediction in the email. Now, the truth is that we don’t have a system. The stock prediction is just a random prediction. To one person we say stock X will go up, to another that stock Y will go down. Because we’ve blasted out thousands of random predictions, out of sheer numbers some will be right. We do this every month for a year. Some people will get 12 months of correct predictions. Now, if they knew our fail rate they’d run. However, they don’t. They’ve just seen us knock it out of the park 12 months in a row.
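The arithmetic behind the scam above is short enough to write down. This sketch assumes each prediction is an independent coin flip (up or down, right half the time) and that we keep emailing everyone for the full year; the function name and the mailing list sizes are made up.

```python
def expected_perfect_records(recipients: int, months: int) -> float:
    """Expected number of people who have only ever seen correct predictions."""
    return recipients * 0.5 ** months

for recipients in (4_096, 10_000, 100_000):
    lucky = expected_perfect_records(recipients, months=12)
    print(f"{recipients:>7,} recipients -> about {lucky:.1f} see 12 correct calls in a row")
```

Under these assumptions the chance of a perfect year for any one person is 1 in 4,096, so a mailing list in the thousands is already enough to produce a few people who have no reason to doubt us.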

With the amount of data online, you can be fed facts that are not technically lies but don’t give the full picture. The amount of data available enables the algorithm to feed you a tormenting narrative all day, every day. You will succumb to data fatigue. In this sense we frankly need to get over ourselves. It’s not about how smart you are; it’s that you are just not going to have the time to verify the high flow of data thrown at you. The dark psychological effects on democracy and health are covered by psychology professor Jonathan Haidt [link].

So what are we to do? Should we banish AI out of fear that it will drive us to division and insanity? No. AI has already done a lot for society and has the potential to keep contributing. However, as with the motorcar, certain regulations need to be put in place. We didn’t know what would happen when we put AI to tasks that had an effect on human mental health. However, with what’s happening now, just as drink-driving limits and seatbelts became law, we need to prohibit AI when it plays a role in manipulating human behaviour.

What can you do right now? I know I have limits with my human brain. I enjoy social media, but I have hard checks in place to prevent me from being completely mashed by a machine that never tires. I don’t have the Facebook app on my phone. I use Facebook, but I don’t need reminders pinged to me, and I don’t want to casually scroll the feed when I find myself idle for a minute or two. I separate my social media. Politics on Facebook? Yeah, sure. However, if I see any politics on my LinkedIn, I block it. Having it forced down your throat everywhere you look isn’t healthy. There are some nut jobs who are so unhinged that they might feel strongly about me doing this, but it’s like oxygen on a flight: you fit your own mask first. If I’m driven to being an irrational head case, what use am I to anyone? I subscribe to slow news. You have to pay for it, but it waits for all the facts to come out before reporting on a story. Considering the data limit of my brain, I’ve found it to be a good investment. I also read a lot of books. I find that more effort goes into a book than into a piece by a hack journalist or professional trying to ride the current wave for likes. Whilst doing this, I force myself to read books from positions I don’t agree with. It’s uncomfortable and sometimes painful. However, it’s essential. Could you imagine a runner wanting to improve but never wanting to feel discomfort?